LLMs 25. A Knowledge-Graph-Based LLM Retrieval-Augmentation Implementation Strategy

Quick Overview

This guide covers knowledge-graph-based retrieval-augmentation (Graph RAG) for large language models, explaining why graph RAG is needed versus vector RAG and detailing entity extraction, subgraph retrieval and construction, conversion of graph data into LLM context, and ranking/evaluation strategies.

Graph RAG (Part 1): Knowledge-Graph Retrieval Augmentation for LLMs

A practical learning hub: why Graph RAG exists, what it changes vs vector RAG, and how to implement + rank it.

Graph RAG is a response to a very specific failure mode in “pure” vector-based RAG: semantic similarity is not the same as factual relatedness. Embeddings are great at pulling “nearby” meanings, but they don’t enforce domain constraints. In many real corpora, that gap becomes the root cause of subtle hallucinations: the model isn’t “making things up from nothing”—it’s being fed plausible but wrong context.

Graph RAG tries to fix that by letting a knowledge graph act like a structured constraint layer. Instead of asking “which text chunks are semantically similar to this query?”, Graph RAG asks “which entities and relations are connected to what the user asked, and along which paths?”

Why do we need Graph RAG?

Vector RAG is built on unstructured text. That’s a feature (easy ingestion) and a weakness (weak constraints). If two terms are often used in similar contexts, embeddings may place them close even when substituting one for the other would be incorrect in your domain.

A clean way to think about Graph RAG is: it reduces retrieval ambiguity by anchoring to explicit relations.

When you have a domain vocabulary (“insulated cup” vs “Thermos”, model numbers, part compatibility, compliance categories, drug interactions, org charts), a graph can enforce “allowed” connections. Even when the LLM is powerful, it cannot reliably infer the missing structure if the retrieved context is misleading.

Graph RAG therefore shines when:

- the domain has stable entities/relations (products, parts, people, orgs, policies, events, papers, citations)

- correctness depends on relationships (who did what, belongs-to, used-by, causes, located-in)

- semantic neighbors are dangerous (synonyms that aren’t interchangeable, near-miss entities, similarly named items)
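To make the "semantic neighbors are dangerous" case concrete, here is a minimal sketch in which a similarity search returns near-miss candidates and an explicit graph relation filters them. The part names, scores, and the `compatible_with` relation are all invented for illustration; they are not from a real catalog.

```python
# Hypothetical candidates a similarity search might return for
# "replacement lid for the T-500 flask". Names and scores are made up.
candidates = [
    ("Lid L-500", 0.93),
    ("Lid L-550", 0.91),  # similar name, but fits a different flask
    ("Lid L-50", 0.88),
]

# Explicit relations stored as (subject, predicate, object) triples.
triples = {
    ("Lid L-500", "compatible_with", "Flask T-500"),
    ("Lid L-550", "compatible_with", "Flask T-550"),
    ("Lid L-50", "compatible_with", "Flask T-50"),
}


def filter_by_relation(candidates, triples, predicate, target):
    """Keep only candidates that have an explicit edge to the target entity."""
    return [
        (name, score)
        for name, score in candidates
        if (name, predicate, target) in triples
    ]


allowed = filter_by_relation(candidates, triples, "compatible_with", "Flask T-500")
print(allowed)
```

The similarity scores alone cannot separate the three lids; the edge lookup can, because it encodes the domain rule directly.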

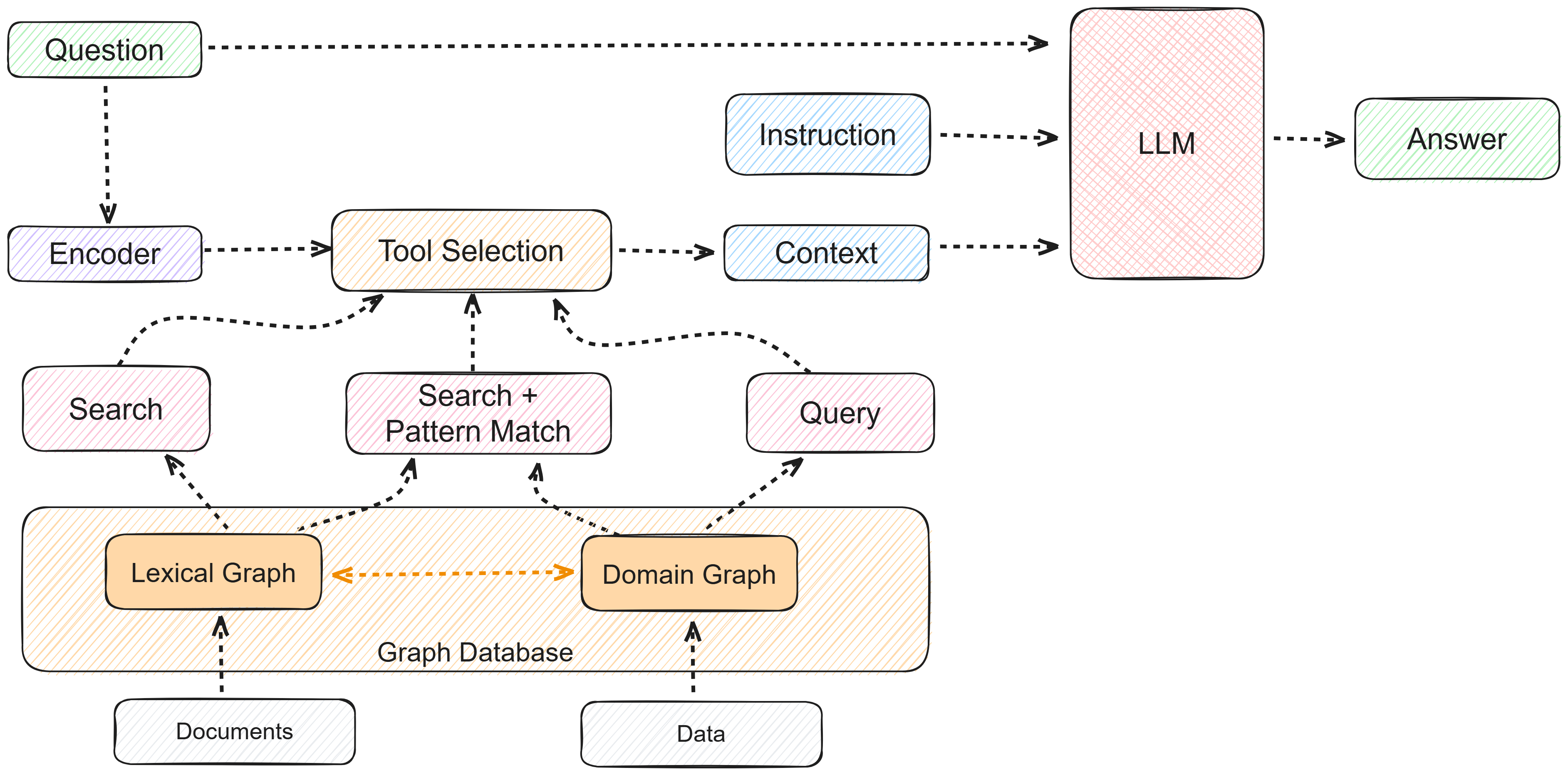

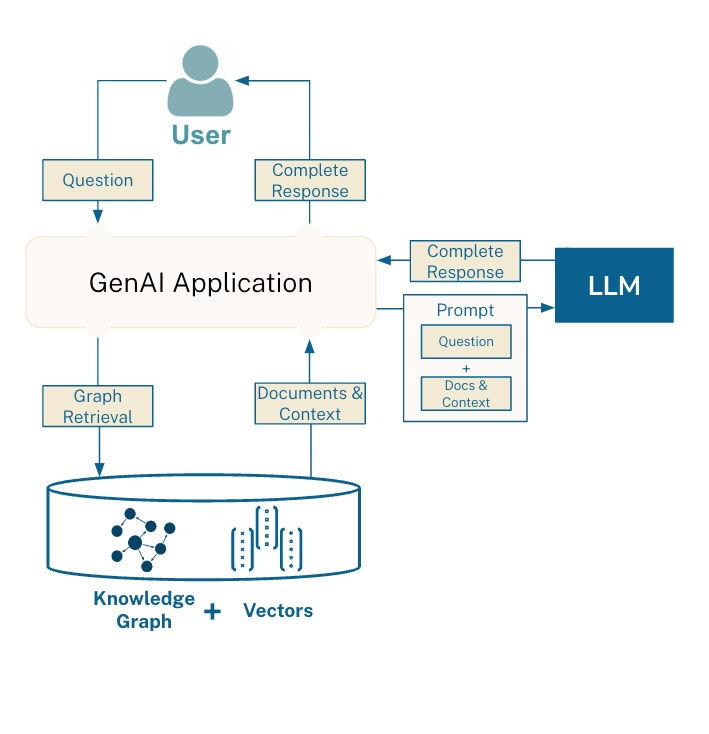

What is Graph RAG?

Graph RAG is a retrieval-augmentation approach where retrieval is driven by a knowledge graph rather than (or alongside) a vector index.

You represent knowledge as triples (or edges):

(subject) —[predicate]→ (object)

Then, given a user query:

- Extract key entities from the query (optionally expand synonyms / aliases).

- Retrieve a relevant subgraph around those entities (k-hop neighborhood, paths, or constrained traversal).

- Convert that subgraph into “LLM-usable” context (triples, paths, short statements).

- Generate an answer that is grounded in that subgraph.

Graph RAG doesn’t replace vector RAG; it typically becomes one retriever among several (graph + dense + sparse + reranker).
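The triple representation described in this section can be sketched with nothing more than a named tuple and a dictionary. The `Triple` class and the `outgoing` index are illustrative names, not part of any particular graph library.

```python
from collections import defaultdict
from typing import NamedTuple


class Triple(NamedTuple):
    subj: str
    pred: str
    obj: str


triples = [
    Triple("NASA", "discovers", "exoplanet LHS 475 b"),
    Triple("NASA", "announces", "future space telescope programs"),
    Triple("Peter Quill", "member_of", "Guardians of the Galaxy"),
]

# Index outgoing edges by subject so a node's relations are one lookup away.
outgoing = defaultdict(list)
for t in triples:
    outgoing[t.subj].append(t)

for t in outgoing["NASA"]:
    print(f"({t.subj}) -[{t.pred}]-> ({t.obj})")
```

A production system would normally keep this in a graph database, but the shape of the data is the same.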

Core idea: “subgraph context” beats “similar text” for relation-heavy questions

A helpful mental model:

- Vector RAG: Query → similar passages → answer

- Graph RAG: Query → entities → connected relations → answer

A knowledge graph is like a “super dictionary” where entries are entities, and definitions are relations. The “definition” of NASA isn’t a paragraph; it’s a set of edges: announces → program, discovers → exoplanet, publishes → images, etc.

That’s why Graph RAG is often better at:

- multi-hop questions (“who did X work with after Y?”)

- disambiguation (“Apple the company vs apple the fruit”)

- constraint satisfaction (“only compatible parts”, “only within policy category A”)
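As a rough illustration of the multi-hop case, the sketch below finds a chain of edges linking two entities with a breadth-first search. The people, projects, and relation names are invented, and edges are treated as undirected for path finding, which is an assumption rather than a rule.

```python
from collections import deque

# Toy graph; entities and relations are invented for illustration.
EDGES = [
    ("Alice", "works_on", "Project Atlas"),
    ("Bob", "works_on", "Project Atlas"),
    ("Project Atlas", "owned_by", "Platform Team"),
    ("Carol", "leads", "Platform Team"),
]


def find_path(edges, start, goal, max_hops=3):
    """Breadth-first search over undirected edges; returns a list of triples or None."""
    neighbors = {}
    for s, p, o in edges:
        neighbors.setdefault(s, []).append((o, (s, p, o)))
        neighbors.setdefault(o, []).append((s, (s, p, o)))

    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        if len(path) == max_hops:
            continue  # hop budget used up on this branch
        for nxt, triple in neighbors.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [triple]))
    return None


path = find_path(EDGES, "Alice", "Carol")
for s, p, o in path:
    print(f"{s} -[{p}]-> {o}")
```

The returned path is itself the evidence: each hop is an explicit edge the LLM can cite, rather than a passage that merely mentions both names.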

A minimal Graph RAG implementation

Below is the simplest shape of Graph RAG. It’s intentionally small: you can expand it later with ranking, hybrid retrieval, and verification.

    def simple_graph_rag(query_str, graph_store, llm):
        """Answer a question using only context retrieved from the knowledge graph."""
        entities = get_key_entities(query_str, llm=llm)    # entity extraction
        entities = expand_synonyms(entities, graph_store)  # alias/synonym expansion (optional)

        subgraph = retrieve_subgraph_context(
            graph_store,
            entities=entities,
            max_hops=2,
            max_nodes=200,
            constraints=None,  # e.g., relation whitelist, time filter, doc source filter
        )

        context_text = format_subgraph_for_llm(subgraph)  # triples/paths → readable context

        # Build the full prompt first so llm.predict receives a single string,
        # the same way it is called in get_key_entities.
        prompt = (
            "Answer using only the graph context.\n\n"
            f"Question: {query_str}\n\n"
            f"Graph context:\n{context_text}"
        )
        return llm.predict(prompt)
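`expand_synonyms` is called above without a definition. One possible sketch, assuming aliases are stored in the graph as `alias` edges (as in the Peter Quill example later on) and that the store exposes `get_edges(node, constraints=None)` returning `(subj, pred, obj)` triples. `TinyGraphStore` is a hypothetical stand-in used only to make the example runnable.

```python
def expand_synonyms(entities, graph_store, alias_predicate="alias"):
    """Add aliases found via alias edges, preserving order and removing duplicates."""
    expanded = []
    seen = set()

    def add(name):
        if name not in seen:
            seen.add(name)
            expanded.append(name)

    for entity in entities:
        add(entity)
        for subj, pred, obj in graph_store.get_edges(entity):
            if pred != alias_predicate:
                continue
            # Alias edges are followed in both directions.
            add(obj if subj == entity else subj)
    return expanded


class TinyGraphStore:
    """Hypothetical stand-in for a real graph store, for demonstration only."""

    def __init__(self, triples):
        self.triples = list(triples)

    def get_edges(self, node, constraints=None):
        # Return every triple that touches the node, as subject or object.
        return [t for t in self.triples if node in (t[0], t[2])]


store = TinyGraphStore([
    ("Peter Quill", "alias", "Star-Lord"),
    ("Peter Quill", "member_of", "Guardians of the Galaxy"),
])
print(expand_synonyms(["Star-Lord"], store))
```

Following alias edges in both directions means a query that uses the nickname still reaches the canonical node.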

Step 1: entity extraction (LLM-assisted or rules-based)

Entity recall is the first bottleneck. If you miss the right entity, the graph can’t help.

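    def get_key_entities(query_str, llm=None):
        # Option A: LLM extraction (good recall, needs constraints)
        # Option B: NER / regex / dictionary matching (faster, safer, lower recall)
        # This sketch implements Option A only, so an llm is required.
        if llm is None:
            raise ValueError("get_key_entities needs an llm for LLM-based extraction")

        prompt = (
            "Extract the minimal set of key entities from the question.\n"
            "Return as a JSON list of strings. No extra text.\n\n"
            f"Question: {query_str}"
        )
        raw = llm.predict(prompt)
        return safe_parse_list(raw)  # helper that parses the JSON list defensively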
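`safe_parse_list` is used above but not defined in this guide. A minimal sketch of what it might do, assuming the goal is to tolerate extra prose around the JSON and to return an empty list rather than raise:

```python
import json
import re


def safe_parse_list(raw):
    """Parse an LLM reply that should be a JSON list of strings; return [] on failure."""
    # Models often wrap JSON in prose, so take the span from the first "[" to the last "]".
    match = re.search(r"\[.*\]", raw, flags=re.DOTALL)
    if match is None:
        return []
    try:
        data = json.loads(match.group(0))
    except json.JSONDecodeError:
        return []
    if not isinstance(data, list):
        return []

    # Keep non-empty strings only, trimmed, in order, without duplicates.
    result = []
    for item in data:
        if isinstance(item, str):
            cleaned = item.strip()
            if cleaned and cleaned not in result:
                result.append(cleaned)
    return result


print(safe_parse_list('Here you go: ["Peter Quill"] hope that helps'))
```

Returning an empty list on failure lets the caller decide whether to fall back to rules-based extraction or to plain vector retrieval.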

Step 2: subgraph retrieval (k-hop neighborhood)

    def retrieve_subgraph_context(graph_store, entities, max_hops=2, max_nodes=200, constraints=None):
        # Breadth-first expansion from the seed entities, one hop per outer iteration.
        # Edges are assumed to be (subj, pred, obj) triples.
        visited = set()
        seen_edges = set()
        frontier = list(entities)
        triples = []

        for _ in range(max_hops):
            next_frontier = []
            for node in frontier:
                if node in visited:
                    continue
                if len(visited) >= max_nodes:
                    return triples  # node budget exhausted
                visited.add(node)

                for subj, pred, obj in graph_store.get_edges(node, constraints=constraints):
                    if (subj, pred, obj) in seen_edges:
                        continue  # the same edge can be reached from more than one node
                    seen_edges.add((subj, pred, obj))
                    triples.append((subj, pred, obj))
                    next_frontier.append(obj)
            frontier = next_frontier

        return triples
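The traversal above depends on a `graph_store.get_edges(node, constraints=...)` call whose behavior the guide leaves open. Below is one hypothetical in-memory version, useful for tests and prototypes. The `constraints` format (a dict with an optional `"relations"` whitelist) is an assumption made for this sketch, and a real deployment would query a graph database instead.

```python
from typing import NamedTuple


class Edge(NamedTuple):
    subj: str
    pred: str
    obj: str


class InMemoryGraphStore:
    """Hypothetical in-memory store exposing the get_edges call used by the traversal."""

    def __init__(self, triples):
        self._out = {}
        for s, p, o in triples:
            self._out.setdefault(s, []).append(Edge(s, p, o))

    def get_edges(self, node, constraints=None):
        """Return outgoing edges of node, optionally limited to whitelisted relations."""
        edges = self._out.get(node, [])
        allowed = (constraints or {}).get("relations")
        if allowed is None:
            return list(edges)
        return [e for e in edges if e.pred in allowed]


store = InMemoryGraphStore([
    ("NASA", "discovers", "exoplanet LHS 475 b"),
    ("NASA", "announces", "future space telescope programs"),
    ("exoplanet LHS 475 b", "orbits", "LHS 475"),
])
print(store.get_edges("NASA", constraints={"relations": {"discovers"}}))
```

Because `Edge` is a named tuple, callers can either unpack it as `(subj, pred, obj)` or read `edge.obj`, so it works with both access styles.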

Step 3: turn graph into LLM-friendly context

Triples are compact, but raw triples can be hard to read. Formatting matters.

    def format_subgraph_for_llm(triples):
        # Example: group by subject for readability
        by_subj = {}
        for s, p, o in triples:
            by_subj.setdefault(s, []).append((p, o))

        lines = []
        for s, rels in by_subj.items():
            rel_str = "; ".join([f"{p} → {o}" for p, o in rels])
            lines.append(f"{s}: {rel_str}")

        return "\n".join(lines)
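Grouping by subject is one option; another is to verbalize each triple as a short statement, which some models read more reliably than arrow notation. The sketch below assumes a small, hand-written template table per predicate (`TEMPLATES` is hypothetical) and falls back to a plain rendering for predicates without a template.

```python
def verbalize_triples(triples, templates=None):
    """Render (subj, pred, obj) triples as short sentences, one per line."""
    templates = templates or {}
    lines = []
    for s, p, o in triples:
        template = templates.get(p)
        if template is not None:
            lines.append(template.format(s=s, o=o))
        else:
            # Fallback: turn snake_case predicates into plain words.
            lines.append(f"{s} {p.replace('_', ' ')} {o}.")
    return "\n".join(lines)


# Hypothetical templates; a real system would cover its own relation vocabulary.
TEMPLATES = {
    "member_of": "{s} is a member of {o}.",
    "alias": "{s} is also known as {o}.",
}

triples = [
    ("Peter Quill", "member_of", "Guardians of the Galaxy"),
    ("Peter Quill", "alias", "Star-Lord"),
    ("Peter Quill", "origin", "Earth"),
]
print(verbalize_triples(triples, TEMPLATES))
```

The fallback line for `origin` is grammatically rough, which is a useful reminder to add templates for the relations that matter most in your domain.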

How Graph RAG works in real examples

Example 1: “Tell me about Peter Quill”

A typical pattern is:

- entity extraction → ["Peter Quill"] (and maybe the alias “Star-Lord”)

- graph query (Cypher or traversal) with max depth 2, returning edges such as:

    Peter Quill —member_of→ Guardians of the Galaxy

    Peter Quill —alias→ Star-Lord

    Peter Quill —origin→ Earth

- the LLM answers using only that subgraph, which discourages the model from “filling in” fanfic details
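For the Cypher route, the depth-2 query could be built along these lines. This is a sketch under assumptions: the `Entity` label and `name` property are a made-up schema, and actually running the query would need a graph database driver session, which is not shown.

```python
def build_khop_query(max_hops=2, limit=50):
    """Return a Cypher query that fetches paths up to max_hops from a named entity."""
    # The hop bound and limit are written into the query text as validated
    # integers; the entity name stays a query parameter ($name).
    if not isinstance(max_hops, int) or max_hops < 1:
        raise ValueError("max_hops must be a positive integer")
    if not isinstance(limit, int) or limit < 1:
        raise ValueError("limit must be a positive integer")
    return (
        "MATCH p = (e:Entity {name: $name})"
        f"-[*1..{max_hops}]-(n) "
        f"RETURN p LIMIT {limit}"
    )


query = build_khop_query(max_hops=2)
params = {"name": "Peter Quill"}
print(query)
print(params)
```

Keeping the entity name as a parameter avoids string-building the user's text into the query, while the `LIMIT` plays the same role as `max_nodes` in the traversal version.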

Example 2: “Tell me events about NASA”

Entity extraction yields ["NASA", "events"] (events is often a hint for time-based filtering).

Subgraph retrieval surfaces event-like relations:

NASA —announces→ future space telescope programs

NASA —discovers→ exoplanet LHS 475 b

NASA —publishes images of→ debris disk

NASA —public release date→ mid-2023

Then the LLM writes a concise “event summary” grounded in those edges.

The key learning: Graph RAG shines when the question maps naturally to relations (“discovers”, “announces”, “publishes”).
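One way to act on a hint word such as "events" is to treat it as a constraint rather than an entity. The sketch below maps hint words to a relation whitelist and filters triples with it. The `HINT_RELATIONS` table and the `{"relations": ...}` constraint shape are assumptions made for illustration.

```python
# Hypothetical mapping from hint words to relation whitelists.
HINT_RELATIONS = {
    "events": {"announces", "discovers", "publishes images of"},
}


def split_entities_and_constraints(terms, hint_relations=HINT_RELATIONS):
    """Separate graph entities from hint words and build a relation whitelist."""
    entities = []
    relations = set()
    for term in terms:
        hint = hint_relations.get(term.lower())
        if hint is None:
            entities.append(term)
        else:
            relations |= hint
    constraints = {"relations": relations} if relations else None
    return entities, constraints


def apply_constraints(triples, constraints):
    """Keep triples whose predicate is whitelisted; no constraints keeps everything."""
    if not constraints:
        return list(triples)
    return [t for t in triples if t[1] in constraints["relations"]]


triples = [
    ("NASA", "announces", "future space telescope programs"),
    ("NASA", "discovers", "exoplanet LHS 475 b"),
    ("NASA", "headquartered_in", "Washington, D.C."),
]
entities, constraints = split_entities_and_constraints(["NASA", "events"])
print(entities)
print(apply_constraints(triples, constraints))
```

With the whitelist in place, the `headquartered_in` edge never reaches the prompt, so the summary stays focused on event-like relations.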

How to optimize ranking in Graph RAG

Once graphs get big, naïve traversal pulls in irrelevant branches quickly. Ranking is the difference between “graph retrieval” and “graph noise”.

A practical approach is coarse-to-fine path ranking:

1) Coarse ranking (cheap features, keep top N paths)

You score candidate paths using lightweight features that correlate with relevance. Typical features include:

- lexical overlap (character/token overlap, Jaccard)

- edit distance (entity name similarity, typo tolerance)

- structural signals (hop count, path length, number of relations/entities)

- numeric alignment (does the query contain numbers also found in the path?)

This stage is ideal for a fast model like LightGBM because the features are tabular and cheap.
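A rough sketch of the coarse stage follows, using only the standard library. Two things here are stand-ins and should be read as assumptions: `difflib.SequenceMatcher` approximates an edit-distance similarity, and the hand-picked weights in `coarse_score` take the place of a trained model such as LightGBM.

```python
import re
from difflib import SequenceMatcher


def tokens(text):
    """Lowercased alphanumeric tokens as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def coarse_features(query, path):
    """path is a list of (subj, pred, obj) triples; returns a dict of cheap features."""
    path_text = " ".join(" ".join(t) for t in path)
    q_tok, p_tok = tokens(query), tokens(path_text)
    union = q_tok | p_tok
    q_nums = set(re.findall(r"\d+", query))
    p_nums = set(re.findall(r"\d+", path_text))
    return {
        "jaccard": len(q_tok & p_tok) / len(union) if union else 0.0,
        # Stand-in for a normalized edit-distance similarity.
        "string_sim": SequenceMatcher(None, query.lower(), path_text.lower()).ratio(),
        "hops": len(path),
        "shared_numbers": len(q_nums & p_nums),
    }


def coarse_score(features):
    """Hand-picked weights for illustration; a trained ranker would replace this."""
    return (
        2.0 * features["jaccard"]
        + 1.0 * features["string_sim"]
        + 0.5 * features["shared_numbers"]
        - 0.1 * features["hops"]
    )


def top_n_paths(query, paths, n=10):
    """Keep the n highest-scoring candidate paths."""
    return sorted(paths, key=lambda p: coarse_score(coarse_features(query, p)), reverse=True)[:n]


query = "Which exoplanet did NASA discover, LHS 475 b?"
p1 = [("NASA", "discovers", "exoplanet LHS 475 b")]
p2 = [("NASA", "publishes images of", "debris disk")]
print(top_n_paths(query, [p2, p1], n=1))
```

Since every feature is a plain number, the same dictionary can be fed to a gradient-boosted ranker once labeled query/path pairs are available.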

2) Fine ranking (semantic match, keep top 2–3)

After coarse filtering, use a stronger matcher (often a cross-encoder / reranker) to score:

- query ↔ path semantic relevance

- optionally, query ↔ (path verbalization) relevance

You end up with a small set of high-quality paths, which the LLM can reliably use without being drowned in irrelevant edges.
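The fine stage can be sketched with the scorer left pluggable. In the example, `overlap_scorer` is a toy stand-in so the code runs without downloading a model; in practice `score_pairs` could be, for instance, the `predict` method of a sentence-transformers `CrossEncoder`, which takes a list of text pairs. The function names here are illustrative.

```python
def verbalize(path):
    """Flatten a path of (subj, pred, obj) triples into one line of text."""
    return " ; ".join(f"{s} {p} {o}" for s, p, o in path)


def fine_rank(query, paths, score_pairs, top_k=3):
    """Score (query, path text) pairs in one batch and keep the top_k paths."""
    pairs = [(query, verbalize(path)) for path in paths]
    scores = score_pairs(pairs)
    ranked = sorted(zip(paths, scores), key=lambda item: item[1], reverse=True)
    return [path for path, _ in ranked[:top_k]]


def overlap_scorer(pairs):
    """Toy stand-in for a cross-encoder: fraction of query tokens found in the path."""
    scores = []
    for query, text in pairs:
        q = set(query.lower().split())
        t = set(text.lower().split())
        scores.append(len(q & t) / len(q) if q else 0.0)
    return scores


query = "what did nasa discover about exoplanet lhs 475 b"
p1 = [("NASA", "discovers", "exoplanet LHS 475 b")]
p2 = [("NASA", "publishes images of", "debris disk")]
print(fine_rank(query, [p2, p1], overlap_scorer, top_k=1))
```

Scoring all pairs in one call matters with a real cross-encoder, because batching is where most of the latency savings come from.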

Where Graph RAG generalizes beyond “knowledge graphs”

Even if you don’t have a formal KG, you can borrow the same idea:

- Turn documents into entities/relations (light extraction) and build a “soft graph”

- Build graphs from metadata (doc → section → heading → paragraph)

- Use citation graphs (paper → cites → paper) for research assistants

- Use org graphs (person → team → project) for internal copilots

The transferable principle is: structure reduces ambiguity. When embeddings are too permissive, graphs add guardrails.
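As an example of the metadata route, the sketch below turns a document's section structure into triples that the same traversal and formatting code can consume. The ID scheme (`doc#s0.p1`) and the relation names are invented for illustration.

```python
def build_structure_graph(doc_id, sections):
    """sections maps heading -> list of paragraph strings; returns (subj, pred, obj) triples."""
    triples = []
    for s_idx, (heading, paragraphs) in enumerate(sections.items()):
        section_id = f"{doc_id}#s{s_idx}"
        triples.append((doc_id, "has_section", section_id))
        triples.append((section_id, "has_heading", heading))
        for p_idx, text in enumerate(paragraphs):
            para_id = f"{section_id}.p{p_idx}"
            triples.append((section_id, "has_paragraph", para_id))
            triples.append((para_id, "has_text", text))
    return triples


doc = {
    "Why do we need Graph RAG?": ["Vector RAG is built on unstructured text."],
    "What is Graph RAG?": [
        "Retrieval is driven by a knowledge graph.",
        "Knowledge is stored as triples.",
    ],
}
triples = build_structure_graph("graph-rag-guide", doc)
for t in triples[:4]:
    print(t)
```

Even this shallow structure lets a retriever answer "what else is in the same section?" by following edges instead of hoping the neighboring paragraph is semantically close.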

Suggested next steps for Part 2

Natural topics for Part 2 include:

- Hybrid retrieval: Graph RAG + dense retrieval + sparse retrieval + reranking

- Entity recall upgrades: alias tables, canonicalization, disambiguation

- Path verbalization + compression: turning paths into minimal evidence

- Evaluation: measuring “graph grounding” and path relevance
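As a starting point for the evaluation topic, path relevance can be approximated by comparing retrieved triples with a small hand-labeled gold set. This is only one possible metric, sketched under the assumption that gold triples exist for each test question; the example data is illustrative.

```python
def path_relevance(retrieved, gold):
    """Precision and recall of retrieved triples against hand-labeled gold triples."""
    retrieved, gold = set(retrieved), set(gold)
    hits = len(retrieved & gold)
    precision = hits / len(retrieved) if retrieved else 0.0
    recall = hits / len(gold) if gold else 0.0
    return precision, recall


retrieved = [
    ("NASA", "discovers", "exoplanet LHS 475 b"),
    ("NASA", "publishes images of", "debris disk"),
]
gold = [
    ("NASA", "discovers", "exoplanet LHS 475 b"),
    ("NASA", "announces", "future space telescope programs"),
]
print(path_relevance(retrieved, gold))
```

Low precision points at traversal noise (too many hops or missing constraints), while low recall usually traces back to entity extraction or alias coverage.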
