What is the STAR Method for Behavioral Interviews? (With FAANG Examples)

Quick Overview

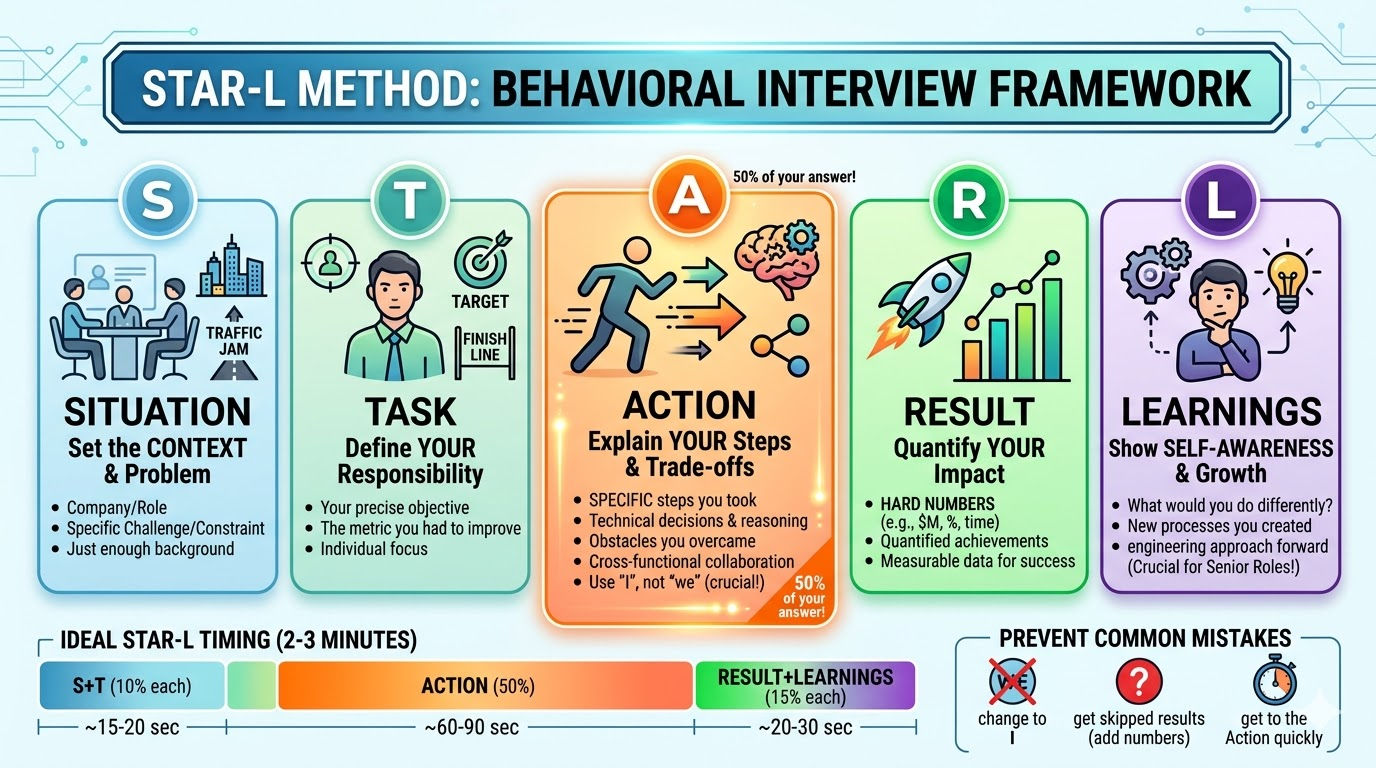

A complete guide to the STAR method for behavioral interviews. It defines Situation, Task, Action, and Result, explains why interviewers look for this structure, and breaks down how long each section of a 2-to-3-minute STAR answer should take. The guide includes three FAANG-style examples for software engineers, introduces the STAR-L (Learnings) extension that interviewers often expect for senior (L5+) roles, and answers the most common questions about the method.

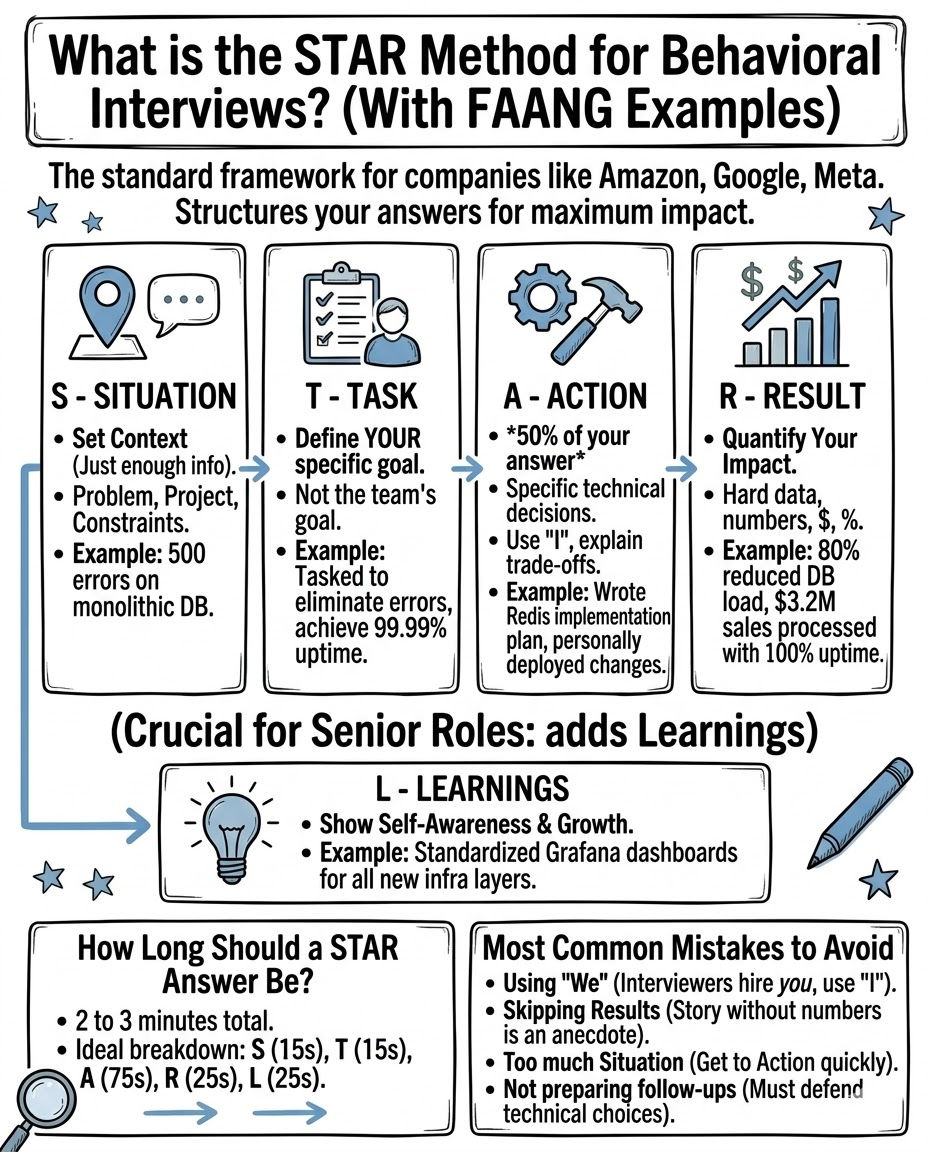

The STAR method is an interview framework that stands for Situation, Task, Action, and Result. It is the gold standard for answering behavioral interview questions and is widely expected at companies like Amazon, Google, and Meta. Using the STAR format keeps your answers structured, data-driven, and focused on your individual impact instead of rambling or generalizing.

For senior software engineering roles (L5 and above), interviewers at top-tier tech companies often expect the STAR-L framework, which adds a final step: Learnings.

In this guide, we will break down exactly how to structure a STAR response, provide specific timing guidelines, give you three FAANG-level engineering examples, and show you how to avoid the most common mistakes candidates make.

Table of Contents

- The STAR-L Framework Breakdown

- How Long Should a STAR Answer Be?

- 3 FAANG STAR Method Examples

- The Most Common STAR Mistakes

- When to Use the STAR Method

- FAQ

The STAR-L Framework Breakdown

If a recruiter or hiring manager asks a question starting with "Tell me about a time when...", they are asking for a STAR-format answer, even if they don't say so. Here is how to construct it:

S — Situation

The Situation sets the necessary context for your story. It should provide just enough background for the interviewer to understand the stakes, without getting bogged down in company history.

What to say: The company name, your role, and the specific problem or project constraint. Example: "At my last company, our primary monolithic database was reaching connection limits during peak holiday traffic, causing 500 errors for roughly 5% of users."

T — Task

The Task defines your specific responsibility within that situation. Interviewers do not want to know what the team's goal was; they want to know what your goal was.

What to say: Your precise objective or the metric you were responsible for improving. Example: "I was tasked as the lead backend engineer to eliminate those 500 errors and ensure 99.99% uptime before the Black Friday sale, which was only 3 weeks away."

A — Action

The Action is the most important part of your answer. It should make up roughly 50% of it. This is where you explain the specific steps you took, the technical trade-offs you considered, and how you executed the plan. Always use "I", not "we".

What to say: Specific technical decisions, cross-functional collaboration, and obstacle resolution. Example: "I analyzed the traffic patterns and realized we didn't need to rewrite the monolith. Instead, I implemented a Redis caching layer for our read-heavy product catalog endpoints. I wrote the implementation plan, got buy-in from the SRE team, and personally deployed the changes over a weekend to minimize impact. I also added circuit breakers to fail gracefully if the cache went down."

R — Result

The Result proves your action was successful. This section must be quantified. Use hard numbers, dollars, percentages, or time saved.

What to say: The business impact of your actions using measurable data. Example: "As a result, we reduced database connections by 80%, average latency dropped from 400ms to 45ms, and we achieved 100% uptime during the Black Friday event, which processed $3.2M in sales."

L — Learnings (Crucial for Senior Roles)

For mid-level and senior roles, stopping at the Result is not enough. Interviewers want to see self-awareness. The Learnings step shows how the experience changed your engineering approach moving forward.

What to say: What you would do differently next time, or a new process you instituted because of this event. Example: "Looking back, I learned that we were flying blind on cache hit rates initially. I now make it a standard practice to build Grafana dashboards for observability before deploying any new infrastructure layer, not after."

How Long Should a STAR Answer Be?

A strong STAR-L answer should take roughly 2 to 3 minutes to deliver out loud. If you go under 90 seconds, you are likely leaving out critical technical details in the Action section. If you go over 3 minutes, you are rambling and losing the interviewer's attention.

Here is the ideal timing breakdown:

| Section | Target Time | Percentage of Answer |
|---|---|---|
| Situation | 15–20 seconds | ~10% |
| Task | 15–20 seconds | ~10% |
| Action | 60–90 seconds | ~50% |
| Result | 20–30 seconds | ~15% |
| Learnings | 20–30 seconds | ~15% |
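To turn these percentages into concrete targets for your own practice runs, you can multiply them by a total answer length. The sketch below is a small Python helper; the names `SECTION_SHARES` and `time_budget` are illustrative, not taken from any tool, and it assumes the approximate shares from the table.

```python
# Approximate share of a STAR-L answer per section, from the timing table.
SECTION_SHARES = {
    "Situation": 0.10,
    "Task": 0.10,
    "Action": 0.50,
    "Result": 0.15,
    "Learnings": 0.15,
}


def time_budget(total_seconds: float) -> dict[str, float]:
    """Split a target answer length (in seconds) into per-section budgets."""
    if total_seconds <= 0:
        raise ValueError("total_seconds must be positive")
    return {
        section: round(total_seconds * share, 1)
        for section, share in SECTION_SHARES.items()
    }


if __name__ == "__main__":
    # A 150-second (2.5-minute) answer, the middle of the 2-to-3-minute target.
    for section, seconds in time_budget(150).items():
        print(f"{section:<10} {seconds:>5.1f} s")
```

For a 150-second answer this gives 15 seconds each for Situation and Task, 75 seconds for Action, and 22.5 seconds each for Result and Learnings. Treat the numbers as rough targets rather than hard limits.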

3 FAANG STAR Method Examples

Example 1: Handling a Technical Failure (Amazon Bias for Action)

Question: "Tell me about a time you made a mistake that impacted production."

Situation: "Last year, I was a backend engineer deploying a routine schema migration to our primary PostgreSQL database."

Task: "My task was to add a new index to the users table without locking it during business hours."

Action: "I used what I thought was a concurrent index build, but I missed a dependency in the ORM configuration. The table locked, bringing down the authentication service for all users. The moment I saw the alerts fire, I didn't wait for a review; I immediately killed the migration process and rolled back the transaction. I then jumped into the incident channel, communicated the status to the support team, and took responsibility for the outage."

Result: "Total downtime was limited to 4 minutes instead of the 45 minutes the migration would have needed to finish. I documented the failure timeline in a blameless post-mortem."

Learnings: "I learned a hard lesson about ORM side effects. Afterward, I wrote a CI/CD script that runs every migration file in a staging environment and flags any statement that takes a blocking table lock, so lock scenarios are caught before they hit production. We haven't had a migration-related outage since."

Example 2: Managing Conflict (Meta Collaborative Culture)

Question: "Tell me about a time you disagreed with a colleague on a technical decision."

Situation: "We were building a new microservice for user notifications. The senior iOS engineer pushed hard to use GraphQL for the API, while I believed REST was more appropriate given our timeline."

Task: "I needed to resolve this disagreement quickly so we wouldn't miss our sprint deliverables, while maintaining a good relationship with the mobile team."

Action: "I set up a 30-minute meeting to understand their perspective. They wanted GraphQL to reduce payload size. I validated their concern, but then presented a quick data analysis I had prepared: 90% of our notification payloads were under 2KB, meaning the payload savings were negligible, but the engineering overhead to set up Apollo Server would delay launch by two weeks. I proposed we stick with REST for V1, but implement sparse fieldsets so the mobile client could specify exactly which fields it wanted, mimicking the benefit of GraphQL."

Result: "The iOS engineer agreed with the compromise. We launched the REST API on time, and the mobile app achieved its payload size goals without the added complexity."

Learnings: "I learned that technical disagreements are rarely about the technology itself; they are about underlying constraints. By addressing their core constraint (payload size) with data rather than arguing about REST vs. GraphQL conceptually, we reached consensus much faster."

Example 3: Navigating Ambiguity (Google Googleyness)

Question: "Describe a time you were assigned a project with vague requirements."

Situation: "I was given an initiative called 'improve developer onboarding.' The only context I received was that new hires were taking too long to make their first commit."

Task: "I needed to define what 'too long' meant, identify the bottlenecks, and reduce the time-to-first-commit metric."

Action: "Instead of guessing what to build, I interviewed our 5 most recent hires. I discovered the bottleneck wasn't the codebase; it was setting up local environments, which took three days due to outdated documentation. I completely scrapped the idea of building a new onboarding portal. Instead, I containerized our entire local development environment into a single Docker Compose file. I wrote a one-line setup script and recruited two new hires to test it, refining it based on where they got stuck."

Result: "We reduced the average time-to-first-commit from 3.5 days to 4 hours (a 95% reduction). Engineering leadership later adopted my Docker configuration as the company-wide standard."

Learnings: "I learned that ambitious-sounding projects often have incredibly simple solutions if you talk to the end-users first. I now start every ambiguous project with user interviews before writing a single line of code."

The Most Common STAR Mistakes

- Using "We" instead of "I". Interviewers cannot hire a team; they can only hire you. If you say "we scaled the database," the interviewer doesn't know if you architected the solution or just attended the meeting. Say "I scaled the database."

- Skipping the Results. A story without a measurable result is just an anecdote. You must quantify your impact. Look up the metrics before the interview if you have to.

- Spending too much time on the Situation. The interviewer does not care about your old company's business model. Get to the Action within 30 to 40 seconds.

- Failing to prepare for follow-ups. A good interviewer will immediately ask a probing question like, "Why did you choose Docker instead of Vagrant in that specific scenario?" You must be able to defend the technical decisions in your story.
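If you draft your stories in writing first, a rough pronoun count can flag answers that lean on "we". The sketch below is a simple word-count heuristic, not a language model; the word lists and the `pronoun_counts` helper are illustrative assumptions, and it will miscount cases such as "US" meaning the country.

```python
import re
from collections import Counter

# Illustrative word lists (not exhaustive): first-person singular vs. plural.
SINGULAR = {"i", "me", "my", "mine", "myself"}
PLURAL = {"we", "us", "our", "ours", "ourselves"}


def pronoun_counts(answer: str) -> Counter:
    """Count first-person singular and plural pronouns in a draft answer."""
    counts = Counter(singular=0, plural=0)
    # Lowercase the text and pull out words, keeping straight apostrophes.
    for word in re.findall(r"[a-z']+", answer.lower()):
        base = word.split("'")[0]  # "i'm" -> "i", "we've" -> "we"
        if base in SINGULAR:
            counts["singular"] += 1
        elif base in PLURAL:
            counts["plural"] += 1
    return counts


if __name__ == "__main__":
    draft = "We scaled the database. I wrote the plan and I deployed it."
    counts = pronoun_counts(draft)
    print(counts["singular"], counts["plural"])  # prints "2 1"
```

A high plural count doesn't automatically mean the answer is wrong (describing the team's context is fine), but it shows you where to check that your own actions are clear.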

When to Use the STAR Method

You should use the STAR method anytime an interviewer asks a behavioral question. These questions typically start with:

- "Tell me about a time when..."

- "Give me an example of..."

- "Have you ever been in a situation where..."

- "Describe a scenario where you..."

The best way to prepare is to build a grid. Write down 8 to 10 of your best professional stories using the STAR-L format. Then, map those stories to core competencies like Leadership, Overcoming Failure, Conflict, and Navigating Ambiguity. This ensures you have a structured, high-impact answer ready for almost any prompt.
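The grid can be a spreadsheet, or a few lines of code that flag competencies with no story yet. In this minimal Python sketch, the story titles are hypothetical labels for the three examples in this guide, and the competency tags assigned to them are assumptions for illustration.

```python
# Competencies named above; extend the list for your target company.
COMPETENCIES = ["Leadership", "Overcoming Failure", "Conflict", "Navigating Ambiguity"]

# Hypothetical story titles mapped to the competencies each story can answer.
STORY_GRID = {
    "Postgres migration outage": {"Overcoming Failure"},
    "GraphQL vs. REST disagreement": {"Conflict"},
    "Developer onboarding project": {"Navigating Ambiguity"},
}


def coverage(grid: dict[str, set[str]], competencies: list[str]) -> dict[str, list[str]]:
    """For each competency, list the stories that cover it (an empty list is a gap)."""
    return {
        competency: sorted(story for story, tags in grid.items() if competency in tags)
        for competency in competencies
    }


if __name__ == "__main__":
    for competency, stories in coverage(STORY_GRID, COMPETENCIES).items():
        status = ", ".join(stories) if stories else "GAP: no story yet"
        print(f"{competency}: {status}")
```

With these sample tags, Leadership shows up as a gap, which tells you which story to prepare next.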

If you struggle to deliver these stories concisely under pressure, tools like PracHub allow you to practice your STAR answers with an AI interviewer tuned to FAANG standards, providing real-time feedback on your pacing, "I" vs "We" usage, and result quantification.

Frequently Asked Questions

What does the STAR acronym stand for?

STAR stands for Situation, Task, Action, and Result. It is a structured framework used to answer behavioral interview questions by clearly describing a past experience, exactly what your role was, the specific steps you took to solve the problem, and the quantifiable business outcome of your work.

Is the STAR method still relevant in 2026?

Yes. The STAR method remains the standard for behavioral interviews at most major tech companies, and Amazon explicitly recommends it in its interview preparation guidance. However, for senior roles, candidates are increasingly expected to use the STAR-L method, which adds a "Learnings" section to the end of the response to demonstrate self-awareness and growth.

What is the difference between Task and Action in the STAR method?

The "Task" is the goal or objective you were assigned (e.g., "I needed to reduce server costs by 20%"). The "Action" is the specific set of steps you took to achieve that goal (e.g., "I migrated our EC2 instances to AWS Graviton processors and implemented auto-scaling rules"). The Action section should make up roughly 50% of your total answer.

How do I prepare STAR stories for an interview?

Do not try to memorize 50 different answers for 50 different questions. Instead, prepare 8-10 highly versatile STAR-L stories from your past experience. Ensure each story includes a quantifiable result. You can then adapt these core stories to fit various behavioral questions regarding conflict, leadership, failure, or innovation.

Does Google use the STAR method?

Yes, Google heavily relies on behavioral interviewing alongside technical rounds to assess "Googleyness" and leadership. Google recruiters actively recommend candidates use the STAR method to structure their responses, especially when answering questions about navigating ambiguity, cross-functional collaboration, and overcoming technical failures.
